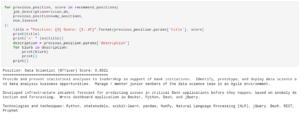

I’ve been meaning to take a closer look at llama.cpp for a while now, and recently spent some time playing with the llama-cpp-python Python bindings. I wasn’t entirely sure I was following the logic for calling functions though, so I put together a little demo script to see it work end to end. It’s available at https://github.com/ccoughlin/functions-llama-cpp-python if you’re interested.

I was mainly interested in the mechanics of functions, but if I were doing this “for real” there are a few things I’d change:

- If I stuck with DuckDuckGo as the search tool, I’d probably switch to scraping the first search result rather than just returning the result’s blurb (that’s actually why I’ve included BeautifulSoup as a dependency). The search result blurbs tend to be hit or miss in terms of returning actual weather conditions.

- The process for making the function configuration for the call to the LLM’s `create_chat_completion` is a little fiddly, so I’d probably add a `make_tool_config` function. Maybe with a little introspection to automate some or all of the process.

- Use some of the same tricks I use with OpenAI, including nested tools and a more thorough tool match check with redundancies in case the LLM gets creative with the tool selection.
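To make the last two ideas a bit more concrete, here’s a rough sketch of what I have in mind. It isn’t code from the demo repo: `make_tool_config`, `match_tool`, the `_JSON_TYPES` mapping and the stub `get_weather` function are all hypothetical, and I’m assuming the OpenAI-style tool schema (`type`, `function`, `parameters`). The tool match check here is just a fuzzy name lookup with `difflib`, which is one possible redundancy rather than the only one.

```python
import difflib
import inspect

# Assumed mapping from Python annotations to JSON Schema type names.
_JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}


def make_tool_config(func):
    """Build an OpenAI-style tool entry from a function's signature and docstring."""
    properties = {}
    required = []
    for name, param in inspect.signature(func).parameters.items():
        # Unannotated or unrecognized types fall back to "string".
        properties[name] = {"type": _JSON_TYPES.get(param.annotation, "string")}
        # Parameters without a default value are treated as required.
        if param.default is inspect.Parameter.empty:
            required.append(name)
    return {
        "type": "function",
        "function": {
            "name": func.__name__,
            "description": inspect.getdoc(func) or "",
            "parameters": {
                "type": "object",
                "properties": properties,
                "required": required,
            },
        },
    }


def match_tool(requested, tools):
    """Return the registered callable closest to the requested name, or None."""
    if requested in tools:
        return tools[requested]
    # Fall back to a fuzzy match in case the LLM mangles the tool name.
    close = difflib.get_close_matches(requested, list(tools), n=1, cutoff=0.8)
    return tools[close[0]] if close else None


def get_weather(location: str, units: str = "metric"):
    """Look up current weather conditions for a location."""
    # Hypothetical stub standing in for the real search tool.
    return f"(stub) weather for {location} in {units} units"


config = make_tool_config(get_weather)
registry = {"get_weather": get_weather}
```

The idea is that `config` would go into the `tools` list passed to `create_chat_completion`, and `match_tool` would sit between the LLM’s response and the actual function call. I’d double check the schema against whatever version of llama-cpp-python is installed before relying on it.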

All in all, though, function calling in llama-cpp-python is fairly well thought out and worth a look. If you’d prefer something closer to OpenAI, llama-cpp-python’s web server is compatible with openai-python. The project has a demo notebook here: https://github.com/abetlen/llama-cpp-python/blob/main/examples/notebooks/Functions.ipynb